A service mesh is a dedicated infrastructure layer that manages service-to-service communication in a microservices architecture, handling service discovery, load balancing, failure recovery, metrics, and monitoring. By moving these functions out of application code, a service mesh lets developers focus on the business logic of their services.

As microservices proliferate, coordinating, managing, and ensuring seamless communication between them becomes a complex task. The service mesh addresses these challenges by providing a unified, application-agnostic framework that simplifies and standardizes inter-service communication, making it robust, secure, and observable.

How Service Meshes Work

Service meshes operate on the network traffic between services rather than inside the application code. This model decouples the networking logic from the application logic, thus avoiding a tangled mess of point-to-point communication paths.

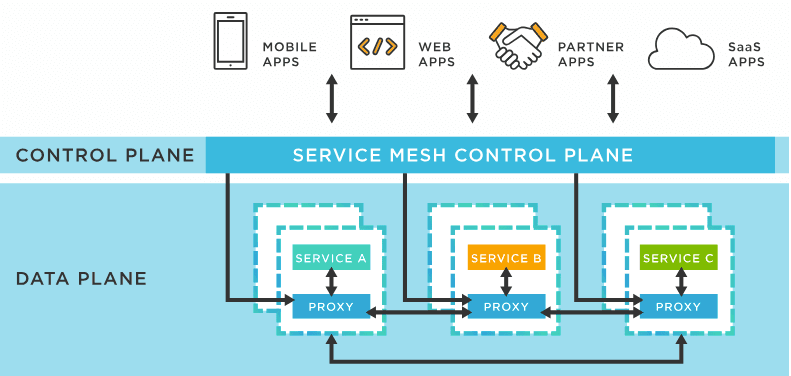

Service meshes can be divided into two main components: the data and control planes.

Data Plane

The data plane, often implemented with sidecar proxies, is responsible for routing, controlling, and transforming the network traffic between services. When a service wants to communicate with another, it sends the request to its own sidecar proxy, which handles the communication with the corresponding proxy of the target service. The sidecar proxy pattern keeps the infrastructure that handles service-to-service communication separate from the services themselves.
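The request path described above can be sketched as a toy, in-memory model. This is only an illustration: the `SidecarProxy` class, the `connect` helper, and the service names are hypothetical, and a real proxy such as Envoy works on network connections rather than Python method calls.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

Handler = Callable[[str], str]


@dataclass
class SidecarProxy:
    """Toy stand-in for a sidecar proxy attached to one service (hypothetical)."""

    service_name: str
    local_handler: Handler  # the application code sitting behind this proxy
    peers: Dict[str, "SidecarProxy"] = field(default_factory=dict)

    def send(self, target: str, payload: str) -> str:
        """Outbound path: the local service hands the request to its own proxy."""
        peer = self.peers.get(target)
        if peer is None:
            raise LookupError(f"no proxy registered for service {target!r}")
        # The proxy talks to the target's proxy, never to the target directly.
        return peer.receive(self.service_name, payload)

    def receive(self, source: str, payload: str) -> str:
        """Inbound path: the target's proxy passes the request to its service."""
        return self.local_handler(f"{source}->{self.service_name}: {payload}")


def connect(*proxies: SidecarProxy) -> None:
    """Make every proxy aware of every other proxy (stand-in for discovery)."""
    for proxy in proxies:
        for other in proxies:
            if other is not proxy:
                proxy.peers[other.service_name] = other


orders = SidecarProxy("orders", lambda msg: f"orders handled [{msg}]")
payments = SidecarProxy("payments", lambda msg: f"payments handled [{msg}]")
connect(orders, payments)
print(orders.send("payments", "charge 42"))
# prints: payments handled [orders->payments: charge 42]
```

Neither handler knows how the other service is reached; that knowledge lives entirely in the proxies, which is the separation the sidecar pattern aims for.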

Control Plane

The control plane, on the other hand, is responsible for controlling the behaviour of the data plane. It configures the proxies and extracts telemetry data from them, which it then uses to provide observability. The control plane provides a bird's-eye view of the entire mesh and is crucial for managing and monitoring the data plane.
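As a rough sketch of that division of labour, the control plane below pushes one configuration to every registered proxy and then gathers request counts from them. The `Proxy` and `ControlPlane` classes and the configuration keys are assumptions made for illustration, not the API of any particular mesh.

```python
from typing import Dict, List


class Proxy:
    """Toy data-plane proxy: holds pushed config and counts requests."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.config: Dict[str, object] = {}
        self.request_count = 0

    def apply_config(self, config: Dict[str, object]) -> None:
        self.config = dict(config)  # copy so proxies do not share one dict

    def handle_request(self) -> None:
        self.request_count += 1


class ControlPlane:
    """Toy control plane: configures proxies and reads telemetry from them."""

    def __init__(self) -> None:
        self.proxies: List[Proxy] = []

    def register(self, proxy: Proxy) -> None:
        self.proxies.append(proxy)

    def push_config(self, config: Dict[str, object]) -> None:
        for proxy in self.proxies:
            proxy.apply_config(config)

    def collect_telemetry(self) -> Dict[str, int]:
        return {proxy.name: proxy.request_count for proxy in self.proxies}


control_plane = ControlPlane()
orders_proxy = Proxy("orders")
payments_proxy = Proxy("payments")
control_plane.register(orders_proxy)
control_plane.register(payments_proxy)

# Hypothetical settings; real meshes define their own configuration schema.
control_plane.push_config({"retries": 2, "timeout_s": 1.5})

orders_proxy.handle_request()
orders_proxy.handle_request()
payments_proxy.handle_request()
print(control_plane.collect_telemetry())  # prints: {'orders': 2, 'payments': 1}
```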

Benefits of Service Meshes

Service meshes provide numerous benefits:

- Observability: Service meshes give in-depth visibility into the service ecosystem, with critical insights into performance, failures, and network behaviour. This helps developers and operators understand the interdependencies between services, detect anomalies, and troubleshoot issues.

- Security: Service meshes enhance security by enforcing access policies, performing traffic encryption, and ensuring secure service-to-service communication.

- Traffic Management: They provide advanced traffic management capabilities, such as load balancing, routing, and circuit breaking.

- Resiliency: Service meshes improve the system's resiliency by providing inbuilt failure recovery features, including retries, timeouts, and failovers.

- Tracing and Monitoring: They support distributed tracing and provide real-time monitoring, enhancing the visibility of service performance and interaction patterns.
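Two of the mechanisms listed above, retries and circuit breaking, can be sketched in a few lines. This is a simplified model under stated assumptions: the class names, the thresholds, and the single-trial "half-open" behaviour are illustrative choices, and real proxies implement these policies with more nuance (for example, per-host limits and retry budgets).

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised when the breaker rejects a call without contacting the upstream."""


class CircuitBreaker:
    def __init__(
        self,
        failure_threshold: int = 3,
        reset_after_s: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after_s:
                raise CircuitOpenError("circuit open; upstream not called")
            # Cool-down elapsed: allow one trial call (half-open). A single
            # further failure reopens the circuit immediately.
            self._opened_at = None
            self._failures = self.failure_threshold - 1
        try:
            result = fn()
        except Exception:
            self._failures += 1
            if self._failures >= self.failure_threshold:
                self._opened_at = self._clock()
            raise
        self._failures = 0  # any success closes the circuit fully
        return result


def call_with_retries(fn: Callable[[], T], attempts: int = 3) -> T:
    """Call fn up to `attempts` times, re-raising the last error on failure."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    last_error: Optional[Exception] = None
    for _ in range(attempts):
        try:
            return fn()
        except CircuitOpenError:
            raise  # retrying against an open circuit would be pointless
        except Exception as exc:
            last_error = exc
    assert last_error is not None
    raise last_error
```

A caller could combine the two as `call_with_retries(lambda: breaker.call(fetch))`, where `fetch` is whatever function performs the upstream request. In a mesh, the equivalent logic runs in the proxy, so the application code contains neither.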

Popular Service Mesh Examples

Istio

Istio is a popular open-source service mesh that provides a robust way to connect, manage, and secure microservices. Istio integrates with Kubernetes and supports a range of environments: on-premises or cloud-hosted deployments, workloads in Kubernetes containers, and services running on virtual machines.

Linkerd

Linkerd is a lightweight, fast, and security-focused service mesh for Kubernetes. It is straightforward to set up and offers capabilities such as load balancing, retries, timeouts, and a dashboard for observability.

Consul Connect

Consul Connect, developed by HashiCorp, provides service-to-service connection authorization and encryption using mutual Transport Layer Security (mTLS). With its service discovery and health-checking features, Consul Connect is a powerful tool for building a secure, agile, and resilient microservice architecture.

Advanced Service Mesh Features

Beyond the fundamental capabilities, many service meshes offer advanced features such as:

- Canary Releases and Blue/Green Deployments: Service meshes can split traffic between different versions of a service. This lets teams send a small share of requests to a new version first, reducing the risk of system-wide failures caused by a problematic update, and then either increase that share step by step or switch between environments in one move.

- Rate Limiting: This feature helps protect your services from being overwhelmed by too many requests. It allows developers to cap the number of requests a service can make or accept in a given period, keeping the load on the service manageable.

- Service Mesh Interface (SMI): SMI is a standard interface for service meshes on Kubernetes. It defines a set of common, portable APIs that provide interoperability between different service mesh technologies. In principle, this means the underlying service mesh can be swapped without any changes to application code.

- Fault Injection: Service meshes allow developers to inject faults into the system intentionally, simulating failures of services or networks. This functionality helps in testing the system's resilience and failure recovery mechanisms.
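Two of the features above lend themselves to a short sketch: weighted traffic splitting for a canary release, and rate limiting with a token bucket. Both are illustrative assumptions rather than any mesh's actual implementation. In particular, `pick_version` hashes a request ID so that the result is repeatable, whereas a real proxy may simply pick at random per request, and the token bucket is one common rate-limiting algorithm among several.

```python
import hashlib
import time
from typing import Callable, Dict


def pick_version(request_id: str, weights: Dict[str, int]) -> str:
    """Choose a service version; weights are percentages that sum to 100."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    # Derive a stable bucket in [0, 100) from the request ID.
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    upper = 0
    for version, weight in weights.items():
        upper += weight
        if bucket < upper:
            return version
    raise AssertionError("unreachable: bucket is always below 100")


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate_per_s` tokens/second."""

    def __init__(
        self,
        rate_per_s: float,
        capacity: int,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.rate_per_s = rate_per_s
        self.capacity = capacity
        self._clock = clock
        self._tokens = float(capacity)
        self._last = clock()

    def allow(self) -> bool:
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Refill for the time that has passed, never beyond capacity.
        self._tokens = min(self.capacity, self._tokens + elapsed * self.rate_per_s)
        if self._tokens >= 1.0:
            self._tokens -= 1.0
            return True
        return False


# Hypothetical rollout: roughly 10% of requests go to the new version.
canary_weights = {"v1": 90, "v2": 10}
print(pick_version("request-123", canary_weights))
```

Shifting the rollout forward is then a matter of changing the weights, for example to `{"v1": 50, "v2": 50}`, with no change to either version of the service.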

Practical Example: Implementing Istio in a Microservices Architecture

Istio leverages an extended version of the Envoy proxy, a high-performance, open-source edge and service proxy that makes the network transparent to applications. This guide will walk you through the process of integrating Istio into your microservice applications.

Istio Installation

The first step is to install Istio on your Kubernetes cluster. There are a few different ways to do this, but the simplest is to use the Istio command-line tool, istioctl. You can download the latest Istio release from the official Istio website.

1. After downloading and extracting the package, navigate to the directory in your terminal and add the istioctl client to your PATH:

cd istio-<version>

export PATH=$PWD/bin:$PATH

2. You can now install Istio using the istioctl install command. This will install Istio using the default profile, which is suitable for most use cases:

istioctl install

3. Verify the installation by checking that the necessary Istio pods are running in the istio-system namespace:

kubectl get pods -n istio-system
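If you want to automate this check, for example in a deployment script, a small wrapper around the same command is one option. The sketch below assumes kubectl's default table output (NAME, READY, STATUS, ... columns); the helper names and the pod names in `SAMPLE_OUTPUT` are made up for illustration, and the set of pods you actually see depends on the Istio version and profile.

```python
import subprocess
from typing import List, Tuple


def parse_pods(table: str) -> List[Tuple[str, str, str]]:
    """Parse default `kubectl get pods` output into (name, ready, status)."""
    lines = [line for line in table.strip().splitlines() if line.strip()]
    pods = []
    for line in lines[1:]:  # first line is the column header
        columns = line.split()
        pods.append((columns[0], columns[1], columns[2]))
    return pods


def all_ready(table: str) -> bool:
    """True if at least one pod is listed and every pod is Running and ready."""
    pods = parse_pods(table)
    if not pods:
        return False
    for _name, ready, status in pods:
        have, _, want = ready.partition("/")  # e.g. "1/1" -> "1", "1"
        if status != "Running" or have != want:
            return False
    return True


def check_istio_system() -> bool:
    """Run the kubectl command from the step above and evaluate its output."""
    completed = subprocess.run(
        ["kubectl", "get", "pods", "-n", "istio-system"],
        check=True,
        capture_output=True,
        text=True,
    )
    return all_ready(completed.stdout)


# Illustrative output only; the pod name suffixes are invented.
SAMPLE_OUTPUT = """\
NAME                          READY   STATUS    RESTARTS   AGE
istio-ingressgateway-abc123   1/1     Running   0          2m
istiod-def456                 1/1     Running   0          2m
"""
print(all_ready(SAMPLE_OUTPUT))  # prints: True
```

`check_istio_system()` is not called here because it needs kubectl and access to a cluster; call it from your own script once both are available.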